Find Your Program

Experience real-world learning and prepare for your future.

Affordability

98% of first-time, full-time undergraduate students receive financial aid.

6-Week Blocks

Focus on 1 or 2 classes at a time.

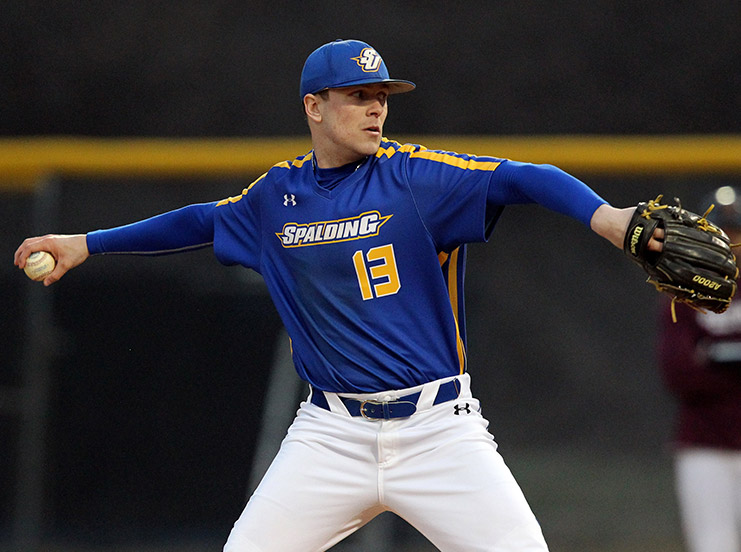

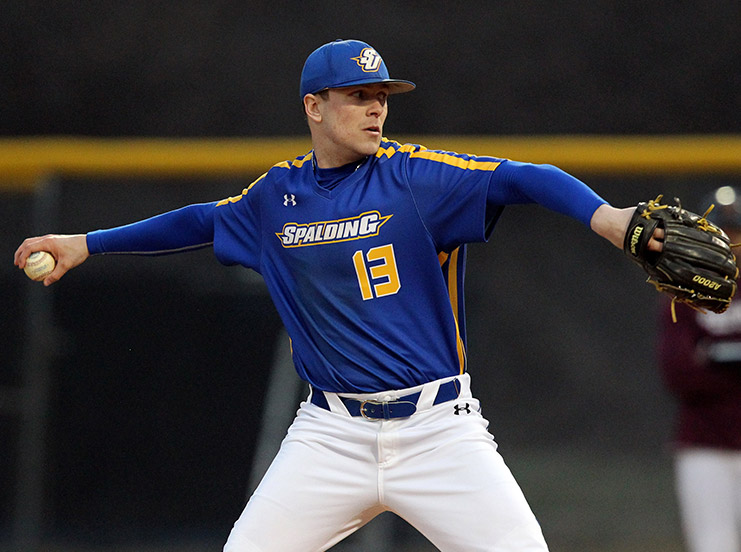

Get to Know Spalding
